De-Dramatizing the Digital

In which AI becomes as interesting as your refrigerator

As I was watching Andor last year it occurred to me — Star Wars is a world without software. In fact, the two most enduring visions of the future in popular entertainment — Star Wars and Star Trek — are both worlds without software. There is firmware that powers the reticles of single-purpose machines, or all-powerful computers you can talk to, but they do not have anything like the iPhone with its myriad apps. Rarely if ever does anyone in Star Wars or Star Trek find their computers interesting. The droids in Star Wars are interesting because they’re your buddies. Those futures show technology stacks that have been completed — everything works well enough that no one needs to organize their life around the act of using it. You may need to kick the droid to get it to run properly, but no one complains about the state of technology broadly; in fact, no one talks about technology at all. Even in Star Trek, whatever tech they have usually needs to be operated more cleverly, and then it delivers. These completed tech stacks recede into the background, allowing daily existence to reassert its ancient claims: love, companionship, ambition, boredom. This is necessary for the drama, but it is also a clue to where we might head. This piece is an exploration of a hunch: that AI is a completing technology for our current stack, and that digital life will become less intense, not more — especially on the long timelines we have been exploring in the Contraptions Book Club.

On the present inconvenience

According to our Contraptions framework we are living in a special time (lucky us) called a Gramsci Gap[1]: the interregnum between eras when monstrous things happen because the old era, the Modernity Machine (MM)[2] in Venkatesh Rao’s terminology, is dying and the next era, the Divergence Machine (DM)[3], also his terminology, is struggling to be born. These historical machines are built over 400 years, ascendant for 400 years, and then fade over 400 years[4].

Since 1600 the MM excelled at attention infrastructure: print, broadcast media, internet platforms — the whole apparatus by which a few centers of power organized the experience of many peripheries. As the MM enters “rapid, partially scheduled disassembly,” its infrastructure is not vanishing. But it is decaying, and there is nothing worse than decaying infrastructure: functional enough to trap you, too degraded to justify the trapping. The feeds still pull, but what they no longer do with any reliability is deliver (except financially, which they do in spades). We don’t scroll because the content is rewarding but because the infrastructure admits of no other interaction. A person marooned in a house with broken stairs will spend a surprising amount of time on the stairs.

The common assumption, held with more conviction than evidence, is that this condition worsens with each new technology. AI, by this account, should intensify our screen addiction, and certainly many of the preconditions for enshittification[5] are present (the main one being that it costs more to deliver than we pay). But I suggest the opposite will occur over time: that AI will complete the technology stack in a way that renders it boring. And this is due to how it will make money over the course of the DM.

A brief excursion into the migration of profits

To explain why I think this, I need to combine two ideas: one about social time and one about where profits go. Allow me to go off the deep end for a moment and illustrate by melding Stewart Brand’s pace layers with Clayton Christensen’s Law of Conservation of Attractive Profits.

Stewart Brand’s pace layers model says that civilization operates in layers of different velocity — fashion changing daily, commerce monthly, infrastructure over decades, culture across centuries, nature on a longer timescale still. The fast layers attract the most attention, but the slow layers provide whatever stability we enjoy.

The Law of Conservation of Attractive Profits[6] holds that when a layer in a value chain is not good enough, integration at that layer becomes necessary to optimize performance. Profits flow to the integrated layer, while adjacent layers become modular and commoditized.

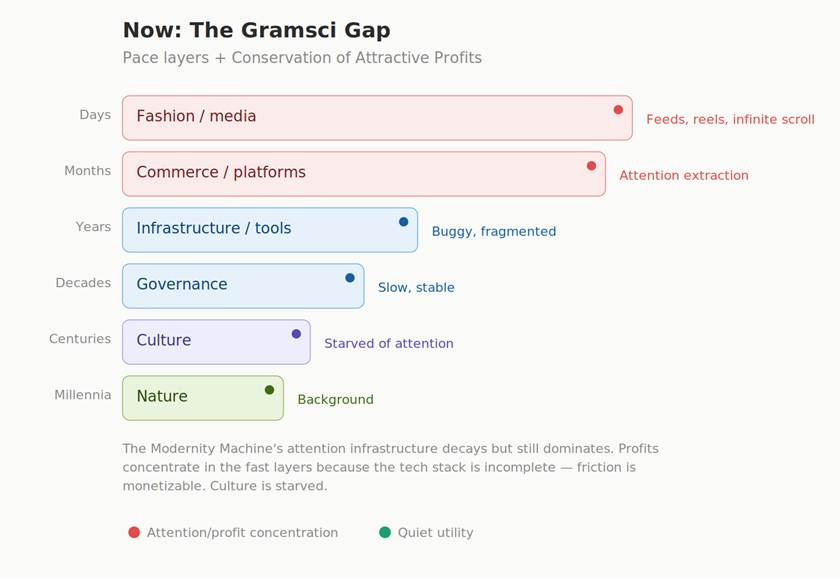

Consider our present situation in light of these two principles taken together. Our digital stack is incomplete. Our tools are fragmented, our formats incompatible, our files perpetually misplaced. Profits concentrate in the fast layers because those layers are not good enough, and our current digital infrastructure is fine for what it is: a commoditized layer that serves the currently integrated fast layers. The diagram below maps this dynamic — pace layers on the vertical axis, profit concentration marked by the red indicators. Notice where the attention collects.

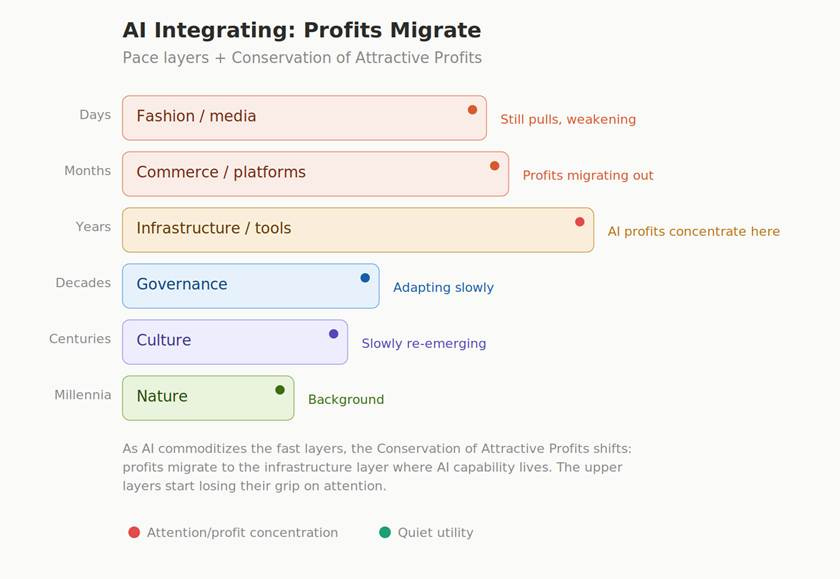

But as trillions go into AI investment, we will recognize just how inadequate our current infrastructure really is: fragmented tools, incompatible formats, unresolved friction everywhere. I expect that AI will become a new integrating force at that layer, which is why so much capital is pouring into it, and as AI matures and moves down the layers to become infrastructure, it will commoditize the upper layers. The need for this is already clear: AI curates the coming tsunami of slop and tames commerce with more focused connections between buyers and sellers. And the profits, obedient to Christensen’s law, will migrate downward — to the infrastructure layer where the new capability resides. The next diagram depicts this migration unfolding, and the shape of the stack shifting.

On the precedent of the printing press

It will be objected that new technologies do not reduce our engagement with them but increase it. People read more after the invention of printing, not less. This is perfectly true and perfectly irrelevant.

Elizabeth Eisenstein’s Printing Revolution in Early Modern Europe — which we read in the Contraptions Book Club last year — clearly demonstrates that while the printing press increased the volume of reading, it also de-dramatized the act of reading. Before printing, accessing a text was an expedition. One traveled to monasteries, bribed librarians, copied manuscripts of uncertain provenance by hand, and could never be entirely confident that the version one labored over bore any consistent relation to what the author had actually written. Knowledge work, in other words, was heroic. It was also, for this reason, unreliable.

After printing, the same work became routine. The infrastructure receded. More people read, vastly more, but reading ceased to be an ordeal, and intellectual energy could be redirected from the acquisition of knowledge to its application. Science, commerce, navigation, and the Reformation are all, in some measure, consequences of knowledge work becoming routine.

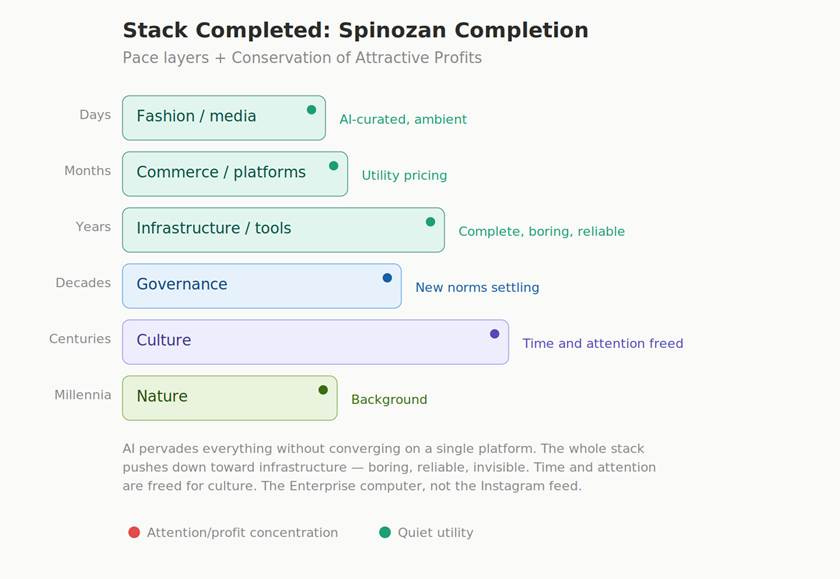

AI is to the digital stack what the printing press was to the knowledge stack. It will not reduce our use of digital tools. It will de-dramatize them. The file-hunting, the reformatting, the scrolling, the debugging — the ten thousand small frictions that currently constitute the texture of digital life — will be resolved not by any single platform but by a pervasive competence distributed across every tool we use. The ordeal disappears. The output proliferates. And we discover, perhaps with some surprise, that we have time for other things.

The courtier’s platform and the heretic’s infrastructure

There are, however, two quite different ways in which a technology stack may be completed. To explore this, we will dive into Matthew Stewart’s The Courtier and the Heretic, his dual biography of Leibniz and Spinoza (Book Club pick for March of this year), which turns out to be unexpectedly useful for thinking about our current technology predicament.

The Leibnizian completion is the one each Big Tech player is attempting. One platform. One ecosystem. One AI assistant that mediates every interaction. Pre-established harmony between your calendar, your correspondence, your social engagements, and your shopping — the best of all possible user interfaces. No plurality in the mechanics, no generative variety, no Darwinian competition. No divergence, and therefore no future in the new era. It is the Modernity Machine in a hoodie.

What Spinoza called natura naturans[7] (nature in its active, generative aspect, the self-causing substance that expresses itself endlessly without settling into any final form) is what a Spinozan tech stack would look like: not a product to be consumed but a capability that pervades and generates. One substance, AI capability, expressing itself through infinite attributes and modes, without converging on a single platform or requiring a canonical interface. This is what AI will look like when it reaches the infrastructure layer.

The Leibnizian model, by contrast, is natura naturata — the finished, produced world. Leibniz in the book is always trying to save the world as it was from slipping into Spinoza’s stream and dissolving, making increasingly sophisticated efforts to shore up something solid when the deeper insight might be that there’s nothing solid to find. This is exactly the move Big Tech is making — shipping natura naturata, a finished product, when the underlying reality is natura naturans, an ongoing process that can’t be captured in a final release. There is no final version. There is only the ongoing expression.

On the advantages of boredom

There is a passage in Hobbes — Part II of the Leviathan, Chapter 21, “Of the Liberty of Subjects” — to the effect that an all-powerful sovereign can also be a limited one. The sovereign can do anything but needs to do relatively little: once the war of all against all is settled, the settlement itself recedes from daily consciousness. The sovereign’s power is what makes the sovereign boring.

Apply this to AI and you arrive at this proposition: the most powerful AI stack is the most boring one. Powerful enough to resolve the frictions that presently consume our hours, boring enough that it doesn’t monopolize our attention[8]. You describe the presentation you wish to make; the AI makes a compliant deck in ten minutes while you eat a sandwich. A great deal of what currently passes for “knowledge work” turns out, upon examination, to have been staring at PowerPoint while thinking. The thinking was the work. The staring was the overhead.

A reasonable objection here is the Jevons Paradox of attention[9]: when a task becomes ten times faster, the market often demands ten times the volume, and you end up staring at more PowerPoint, not less. This is a real pattern (email did not produce leisure, it produced more email), but I think it misidentifies what changes. After the printing press, Europe did not produce fewer texts; it produced enormously more. But the experience of producing them was de-dramatized. A sixteenth-century printer produced more pages in a day than a twelfth-century monk copied in a year, but the printer did not experience the work as heroic. The volume increased; the drama migrated elsewhere. The question is not whether AI produces more decks but whether the act of producing them remains the organizing friction of your workday or becomes, like typing, something you do without thinking about the fact that you’re doing it.

I should say plainly that this is not “best of all possible worlds” optimism — not the complacency Voltaire satirized in Candide. It is something more modest: the digital world will become good enough to stop commanding our attention, in much the way that plumbing became good enough that we stopped thinking about plumbing. To continue with Voltaire, we can turn our attention to tending our own garden.

The cost of the alternative is worth stating plainly. If Leibnizian platforms dominate and sustain our current monopolies, we may extend the Gramsci Gap indefinitely, mistaking a transitional condition for a permanent one. There is a subtler danger as well. Even if AI reaches the infrastructure layer, it matters enormously how it arrives there. Infrastructure monopolies — AWS, payment gateways, chip manufacturers — can be just as extractive as attention monopolies, perhaps more so, because their extraction is invisible. You don’t resent your cloud provider the way you resent your social media feed, but it takes its cut of everything just the same. The danger is not that AI fails to reach the infrastructure layer but that it arrives as natura naturata — a finished product owned by three firms — rather than as natura naturans. The Gramsci Gap’s monsters don’t disappear in this scenario; they simply put on boring clothes and move downstairs. The Spinozan completion matters precisely because it resists this capture: a generative process expressing itself through many modes is harder to monopolize than a finished product shipping from a single source. If the Spinozan model holds, the end state looks something like this:

There is a deeper version of this concern, one that Brian Arthur’s The Nature of Technology helps articulate. Arthur argues that technologies never truly complete themselves — they structurally deepen, adding sub-systems to handle the problems their own operation creates. By this account, AI will not make the digital stack boring so much as it will stratify its complexity: simple and quiet on the surface, more intricate than ever underneath. The drama doesn’t disappear; it becomes specialized, experienced only by the shrinking class of people who maintain the infrastructure everyone else has been trained not to notice. This is already how electricity works — boring if you’re making toast, extraordinarily complex if you’re managing the grid. So the claim is not that AI will make technology boring in some absolute sense, but that it will make technology boring for most people most of the time, while concentrating its complexity — and therefore its power — in the maintainer layer. Whether that stratification remains legible and governable, or whether it produces a new priesthood of infrastructure lords, is a question the Spinozan model at least poses correctly, even if it does not guarantee the right answer.

On the length of transitions

The DM, by the accounting of our framework, has a four-hundred-year operating life. We are just twenty-five years into its deployment. For context, four hundred years would carry you from today to roughly the early-25th-century era depicted in Star Trek: Picard. The MM created numerous complete stacks (e.g., electricity, plumbing, the combustion engine), but each completion took decades to propagate through the existing infrastructure. The printing press took a century to de-dramatize knowledge access across Europe. In that case, as profits do in the Christensen framework, the drama moved to another part of the value chain, spawning the Reformation, the Wars of Religion, and the Westphalian nation states.

The trajectory, as I see it: screens give way to voice, voice gives way to ambient computing, ambient computing gives way to companions — droids that we treat more like people than objects. Each step pushes the technology stack further down the pace layers, further from fashion, past infrastructure, closer to culture and perhaps even a new notion of nature.

I notice the early signs already. The conversations I have with Claude are of a quality I find genuinely stimulating — more so, if I am being honest, than all but the best of what I encounter scrolling through feeds — and they take a fraction of the time. High return for invested attention. This is what de-dramatized digital life feels like in its preliminary form: not less technology, but technology that has found its level.

And before I get accused of optimism (I am aware that in 2026, any suggestion that a technology might produce something other than misery reads as optimism), I’m sure many suitors will crop up to monopolize our time. As the printing press example suggests, de-dramatizing one layer tends to push the drama elsewhere. Where the new drama emerges (and it will emerge) is something we need to track over the course of the DM. This is not a return to pre-digital sociality, which would be nostalgia of exactly the kind our framework regards with suspicion. It is a new configuration, one that the DM has not yet produced because the Gramsci Gap’s monsters have been eating all the bandwidth.

My hope is that Star Wars and Star Trek are dispatches from the far side of a long transition we have only just begun — a transition in which the most revolutionary act may turn out to be making technology so powerful that it becomes, at last, perfectly boring.

[1] From Gramsci’s Prison Notebooks: “The old world is dying, and the new world struggles to be born; now is the time of monsters.” The “time of monsters” phrasing is actually Slavoj Žižek’s gloss on the original (which reads “in this interregnum a great variety of morbid symptoms appear”), but it has become the canonical form.

[2] The Modernity Machine is the civilizational operating system that dominated roughly 1600–2000, optimized for legibility, convergence, and canonical order: print, broadcast, nation-states, standardization.

[3] The Divergence Machine is the successor machine, constructed 1600–2000 alongside the deployment phase of the MM, and is now entering deployment mode itself. It is optimized for proliferation, variety, and coexistence without closure rather than for convergence and standardization.

[4] The MM was constructed 1200–1600 and operated at a steady plateau of capability 1600–2000; the DM was constructed 1600–2000 alongside the MM and entered full deployment around the year 2000. See Rao’s World Machines Project for the complete timeline.

[5] Cory Doctorow’s term for the lifecycle by which platforms first benefit users, then benefit business customers at users’ expense, then benefit only themselves before collapsing.

[6] See Ben Thompson’s Stratechery essays on attractive profits, particularly “Netflix and the Conservation of Attractive Profits” (2015) and subsequent applications of the framework.

[7] Spinoza’s distinction between nature as active, generative substance (natura naturans, “nature naturing”) and nature as produced, finished world (natura naturata, “nature natured”). Central to the Ethics.

[8] I don’t want an all-powerful AI broadly, just one that is narrowly powerful over the digital friction in my life so that the friction stops organizing my days.

[9] Economist William Stanley Jevons observed in 1865 that increased efficiency in coal use led to more coal consumption, not less, because cheaper use expanded demand. The “of attention” twist is the contemporary analog: making tasks faster often produces more tasks, not more leisure.
